
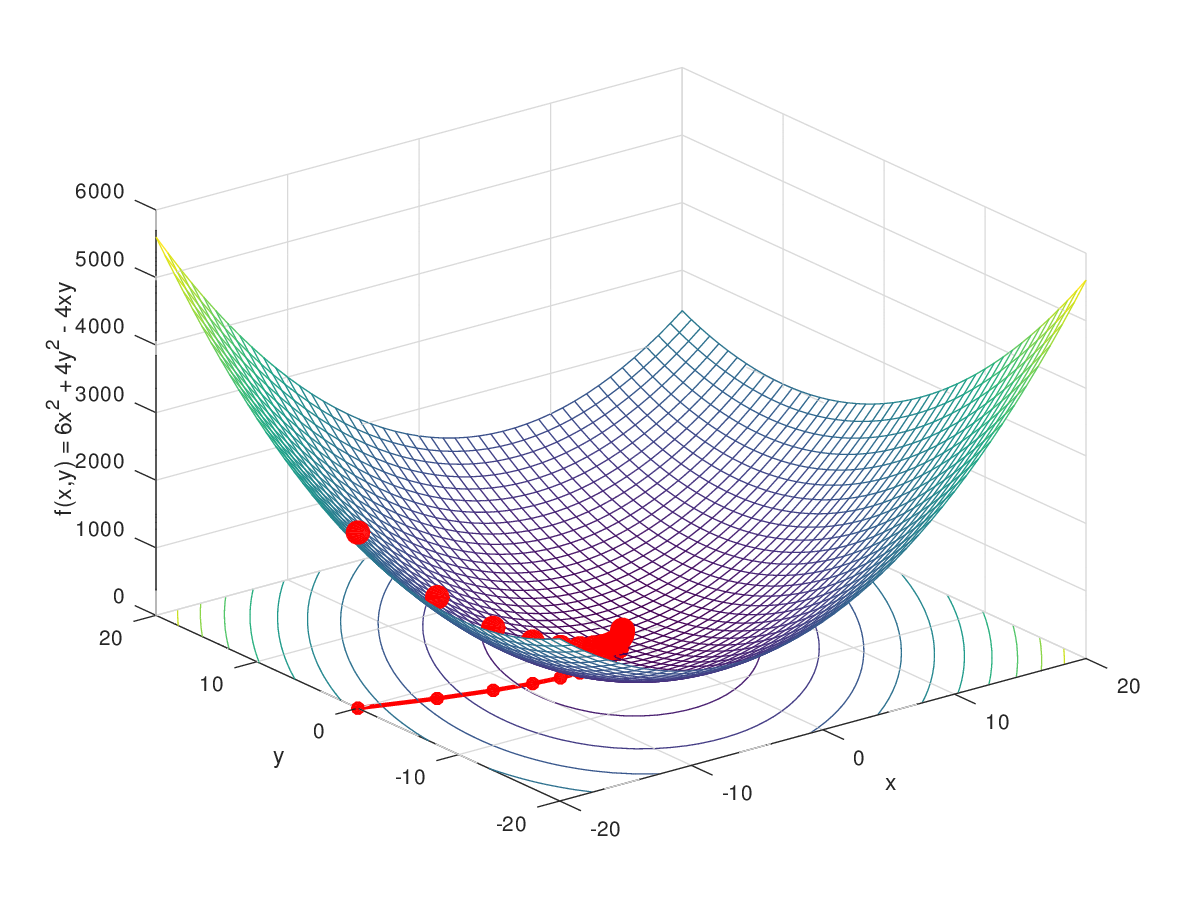

2/28/2023

Gradient descent

Simple gradient descent relies only on the gradient calculation. In simple words, every step we take toward the minimum tends to decrease the slope. If we visualize it, the derivative is large in a steep region of the curve, so the steps taken by our model are large too. As we enter a gentle region of the curve, the derivative shrinks and so do the steps, which means it takes longer to reach the minimum.

Contour maps visualizing gentle and steep regions of the curve
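To make the steep-versus-gentle behavior concrete, here is a minimal sketch. It assumes a toy cost function f(x) = x**2, and the function name, starting point, and learning rate are illustrative choices of mine, not something from a particular library.

```python
def gradient_descent_steps(start, learning_rate, num_steps):
    """Minimize f(x) = x**2 and record the size of every step taken."""
    x = start
    step_sizes = []
    for _ in range(num_steps):
        grad = 2 * x                 # derivative of x**2 at the current point
        step = learning_rate * grad  # the step is proportional to the slope
        x -= step
        step_sizes.append(abs(step))
    return x, step_sizes


# Start far from the minimum, where the curve is steep (assumed toy values).
final_x, step_sizes = gradient_descent_steps(start=10.0, learning_rate=0.1, num_steps=50)

print("first step:", step_sizes[0])
print("last step:", step_sizes[-1])
print("final x:", final_x)
```

The first steps are large because the slope is large far from the minimum, and each later step is smaller than the one before it as the curve flattens out.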

Gradient descent is a term that you will often hear in connection with neural networks. Indeed, the optimization (learning) phase of a neural network, backpropagation, uses gradient descent as the method to update the weights. Gradient descent is very common in neural networks, and it is one of the first things to learn during your machine learning journey. In this article, I will give you a brief overview of what gradient descent is and what some of its variations are.

Gradient descent is an optimization approach in machine learning that can find good solutions to a wide range of problems. It operates by iteratively tweaking the parameters to minimize the cost function. The learning rate, an essential parameter of this method, determines the size of the steps. If the learning rate is low, the algorithm has to go through many iterations before it converges, which takes a very long time. However, if the learning rate is very high, the method can diverge with ever larger values, which prevents it from finding a good solution.

When employing gradient descent, make sure that all features have the same scale; otherwise, the process will take a long time to converge.

Gradient descent has several forms depending on how much of the data is used to compute the gradient. The primary reason for these differences is computational efficiency.

For the whole training data set, batch gradient descent computes the gradient of the cost function with respect to the parameter W. A dataset may contain millions of data points, which makes computing the gradient over the whole dataset computationally expensive. Batch gradient descent may be exceedingly slow, because you need to calculate the gradients for the whole dataset to perform a single parameter update.

In stochastic gradient descent (SGD), the gradient for each update is computed using a single training data point x. Each update is now considerably faster to calculate than in batch gradient descent, and over many updates you still move in the same general direction. The gradient produced in this manner is a stochastic approximation to the gradient produced using the whole training data. Scientists just love their complicated words.

In mini-batch gradient descent, the gradient is calculated for each small mini-batch of training data. That is, you first divide the training data into small groups. The mini-batch size M is frequently in the 30–500 range, depending on the situation.

Of the variants above, SGD and mini-batch GD are the most popular. Mini-batch GD is commonly employed because computational infrastructure (compilers, CPUs, and GPUs) is frequently tuned for vector additions and vector multiplications. In most cases, you make numerous passes over the training data until the termination requirement is reached. Also, because the update phase in SGD and mini-batch GD is significantly more computationally efficient, you often run hundreds to thousands of updates between checks of whether the termination conditions are satisfied.

Performance of each variant to reach a global minimum

If you made it this far in the article, thank you very much. I hope this information was of use to you. Feel free to use any information from this page; I'd appreciate it if you simply link to this article as the source.

If you have any additional questions, you can reach out or message me on Twitter. If you want more content like this, join my email list to receive the latest articles.
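As a companion to the three variants described in this article, here is a rough sketch in plain Python. It assumes a tiny linear model y = w*x + b with a mean-squared-error cost, and the names `gradient` and `train`, the toy data, and the hyperparameters are all illustrative assumptions on my part. The only thing that changes between the variants is how many data points feed each update.

```python
import random


def gradient(w, b, batch):
    """Mean-squared-error gradient for y = w*x + b, averaged over a batch."""
    n = len(batch)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in batch) / n
    return grad_w, grad_b


def train(data, batch_size, learning_rate=0.3, epochs=500, seed=0):
    """batch_size == len(data): batch GD; 1: SGD; in between: mini-batch GD."""
    rng = random.Random(seed)
    data = list(data)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # visit the data in a new order on every pass
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            grad_w, grad_b = gradient(w, b, batch)
            w -= learning_rate * grad_w  # step against the gradient
            b -= learning_rate * grad_b
    return w, b


# Toy, noise-free data drawn from y = 3x + 1 (an assumption for illustration).
data = [(i / 20, 3 * (i / 20) + 1) for i in range(21)]

w_batch, b_batch = train(data, batch_size=len(data))  # one update per pass
w_sgd, b_sgd = train(data, batch_size=1)              # one update per point
w_mini, b_mini = train(data, batch_size=5)            # one update per group

print("batch:     ", w_batch, b_batch)
print("SGD:       ", w_sgd, b_sgd)
print("mini-batch:", w_mini, b_mini)
```

The mini-batch size of 5 is only there because the toy dataset has 21 points; on real data you would pick a value in the usual range mentioned above. With the same number of passes over the data, batch GD makes one update per pass while SGD makes one per data point, which is where the efficiency difference comes from.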
